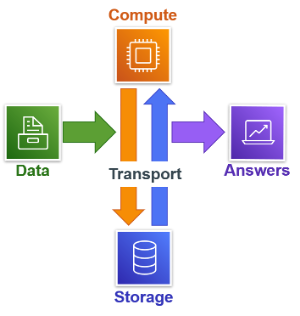

There are three basic functional components of any computer platform: compute, storage, and transport. We know what compute and storage are. Transport is the function of moving data between storage and compute. Of the three, transport, more commonly referred to as input/output (I/O), is the most expensive in terms of scalable resources.

Teradata Vantage's highly efficient management of transport with the BYNET has been the cornerstone of Vantage’s success in linear scalability since the original, shared-nothing architecture was conceived.

In the simplistic messaging of cloud promotion, the trend has focused on the separation of compute and storage as well as the independent scaling of each. Rarely, however, is the critical nature of transport mentioned, and this is the missing link.

Compute performs calculations on data and storage stores data, but that data must be moved from storage to compute first.

Why we need to pay attention to transport costs

Vantage appears expensive when compared to other platforms based solely on static compute and storage with no data movement. Once workloads are applied and data is transported between compute and storage, the cost comparison inverts, and you will find that other platforms grow more expensive than Vantage.


Simply examining incremental scale-up costs reveals an exponential pattern. Vantage, in orange, can scale up in linear increments to accommodate an increased workload. Another cloud platform, in blue, scales by a factor of two for each increment. Also consider that this exponential scaling can be performed automatically with auto-scaling, so the associated costs will quickly double, then double again, and continue rapidly higher.
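
To make the difference between the two scaling patterns concrete, here is a minimal sketch in Python. It is only an illustration: the starting size of one capacity unit, the increment of one unit per step, and the function names are all assumptions made for this example, not actual pricing or sizing from Teradata or any other vendor.

```python
# Illustrative sketch only: every number here is an assumption,
# not real vendor pricing or sizing.
BASE_UNITS = 1  # assumed starting capacity, in arbitrary units


def linear_units(step: int, increment: int = 1) -> int:
    """Capacity after `step` scale-ups when each step adds a fixed increment."""
    return BASE_UNITS + step * increment


def doubling_units(step: int) -> int:
    """Capacity after `step` scale-ups when each step doubles the previous size."""
    return BASE_UNITS * 2 ** step


def scale_table(steps: int) -> list[tuple[int, int, int]]:
    """Rows of (step, linear capacity, doubling capacity) for steps 0..steps."""
    return [(s, linear_units(s), doubling_units(s)) for s in range(steps + 1)]


if __name__ == "__main__":
    for step, lin, dbl in scale_table(5):
        print(f"step {step}: linear={lin} units, doubling={dbl} units")
```

If cost tracks capacity, the gap between the two columns widens with every step: a workload that needs slightly more than the current size pays for one more increment in the linear case, but for a full doubling in the other.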

Make more effective use of what you buy

Scaling is not the only way to respond to increased workloads. A workload is defined by Merriam-Webster as “the amount of work performed or capable of being performed (as by a mechanical device) usually within a specific period.” Work can be measured in terms of queries per hour or query concurrency.

Alternative platforms cannot match the linear scalability and workload throughput of Vantage. Their solution is to break up the workload into multiple data warehouses and only focus on separation of compute and storage, as well as the independent scaling of compute and storage, thereby ignoring the complexity and cost of transport.

This solution simply offers exponentially increasing costs of compute and storage over time instead of thoughtful optimization of transport that would actually lower the usage costs.

Pay for what you use pricing

Besides being a more cost-effective solution, Teradata offers consumption pricing that is based on what is actually used. Only customer-initiated queries and loads that are successfully completed are included in the pricing. Alternative platforms make you pay for the total provisioned instance while active, regardless of your actual usage.

Under the consumption pricing model, the price per unit remains consistent and Teradata takes care of scaling behind the scenes. Since consumption pricing became available, Teradata customers have done the math and realized how much less expensive Vantage is for business-critical workloads. This contrasts with their painful experience when trying out alternatives that overran their expected budgets.
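
The difference between the two billing approaches described in this section can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not Teradata's actual billing formula: the `Job` record, the `"customer"` and `"system"` labels, the unit of measure, and both price parameters are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Job:
    """A hypothetical record of one query or load."""
    initiated_by: str  # "customer" or "system" (assumed labels)
    completed: bool    # True if the job finished successfully
    units: float       # resource units consumed (assumed unit of measure)


def consumption_bill(jobs: list[Job], price_per_unit: float) -> float:
    """Bill only customer-initiated jobs that completed successfully."""
    billable = sum(
        j.units for j in jobs if j.initiated_by == "customer" and j.completed
    )
    return billable * price_per_unit


def provisioned_bill(active_hours: float, price_per_hour: float) -> float:
    """Bill for every hour the instance is active, regardless of usage."""
    return active_hours * price_per_hour
```

In this sketch, a failed query or a system-initiated job adds nothing to the consumption bill, while the provisioned bill depends only on how long the instance stays active.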

Wrap-up: include transport when pricing your analytic platform

You need to consider all three functional components of a computer platform: compute, storage, and transport. Workloads are based on the amount of work that can be performed in a period and work cannot be performed if data is not transported from storage to compute. Teradata's core capability in optimizing transport achieves linear scalability and maximized throughput to minimize cost as usage grows.

Pat Alvarado is a Sr. Solution Architect with Teradata and a senior member of the Institute of Electrical and Electronics Engineers (IEEE). Pat’s background started in hardware and software engineering, applying open source software for distributed UNIX servers and diskless workstations. Pat joined Teradata in 1989, providing technical education to hardware and software engineers and building out the new software engineering environment for the migration of Teradata Database development from a proprietary operating system to UNIX and Linux in a massively parallel processing (MPP) architecture.

Pat presently provides thought leadership in Teradata and open source big data technologies in multiple deployment architectures such as public cloud, private cloud, on-premise, and hybrid.

Pat is also a member of the UCLA Extension Data Science Advisory Board and teaches on-line UCLA Extension courses on big data analytics and information management.
